The Real AI Bottleneck

Hyperscalers are bypassing the grid, and we should benefit from it

This isn’t a new subject, and it isn’t even the first time I’ve written about it, but this time it’s more concrete: what was a narrative a year ago has turned into real spending, and real cash generation for the companies providing the right services.

Electricity & Data Centers

It won’t surprise you if I tell you that data centers run on electricity, right? Hardware has to be plugged into a power source, and GPUs usually draw more power than average hardware. Since a rack holds dozens of GPUs and a data center holds dozens of racks, plus cooling systems, ventilation, etc., an AI data center consumes more than a traditional one.

It’ll actually consume a sh*t ton of electricity, pardon my French.

For comparison, a U.S. family of four consumes ~13,000 kWh per year on average, slightly more than 1,000 kWh per month. And their consumption isn’t constant around the clock: kids go to school, parents go to work, and everyone sleeps at night. Keep this in mind, as it’ll create a problem.
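To make the household numbers concrete, here is a small back-of-the-envelope sketch. It only uses the ~13,000 kWh per year figure above and assumes a 365-day year; the function names are illustrative, not from any library.

```python
HOURS_PER_YEAR = 365 * 24  # 8,760 hours, ignoring leap years

def monthly_kwh(annual_kwh: float) -> float:
    """Average monthly consumption from an annual total."""
    return annual_kwh / 12

def average_power_kw(annual_kwh: float) -> float:
    """Average continuous draw if the annual energy were spread evenly over every hour."""
    return annual_kwh / HOURS_PER_YEAR

household_kwh = 13_000  # U.S. family of four, figure from the text
print(f"{monthly_kwh(household_kwh):,.0f} kWh per month")     # 1,083 kWh per month
print(f"{average_power_kw(household_kwh):.2f} kW on average")  # 1.48 kW on average
```

That 1.48 kW is only an average: a household’s actual draw swings around it over the day, whereas a data center’s draw stays close to flat.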

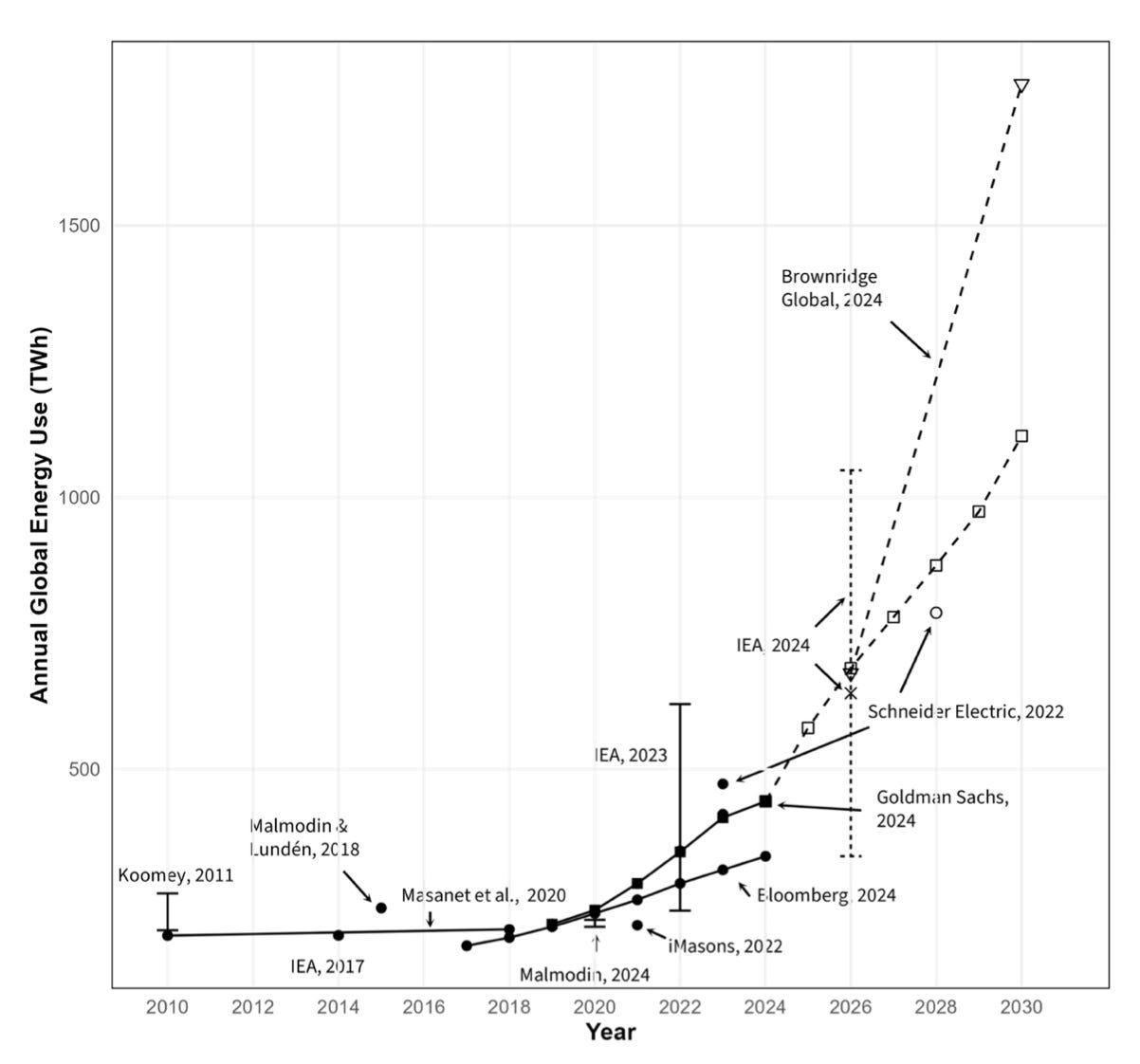

Hardware doesn’t sleep; it runs 24/7. Today’s AI data centers need hundreds of megawatts (MW), and gigawatt (GW) data centers are already being built. Current estimates put data center consumption between 175 and 200 TWh per year (all data centers, not just AI), which is ~5% of total U.S. generation, or the consumption of ~13.85M families of four.
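The conversions behind these figures are simple enough to sketch. Two inputs are assumptions of mine, not from the text: total U.S. generation of roughly 4,200 TWh per year, and a facility running at full power all year unless a lower utilization is passed. Under those assumptions, 175 to 200 TWh lands at roughly 4% to 5% of generation, and the ~13.85M families figure corresponds to about 180 TWh.

```python
HOURS_PER_YEAR = 8_760
HOUSEHOLD_KWH_PER_YEAR = 13_000  # family of four, from the text
US_GENERATION_TWH = 4_200        # assumption: approximate annual U.S. generation

def annual_twh(power_gw: float, utilization: float = 1.0) -> float:
    """Energy used in a year by a load of `power_gw` running at `utilization` (0 to 1)."""
    gwh = power_gw * HOURS_PER_YEAR * utilization
    return gwh / 1_000  # 1 TWh = 1,000 GWh

def families_equivalent(twh: float) -> float:
    """Number of families of four consuming the same energy in a year."""
    return twh * 1e9 / HOUSEHOLD_KWH_PER_YEAR  # 1 TWh = 1e9 kWh

def share_of_us_generation(twh: float) -> float:
    """Fraction of (assumed) total U.S. generation."""
    return twh / US_GENERATION_TWH

print(f"1 GW running 24/7: {annual_twh(1.0):.2f} TWh per year")   # 8.76 TWh per year
print(f"180 TWh: {families_equivalent(180) / 1e6:.2f}M families")  # 13.85M families
for twh in (175, 200):
    print(f"{twh} TWh: {share_of_us_generation(twh):.1%} of U.S. generation")
```

Put differently, a single 1 GW campus running flat out uses about as much energy in a year as ~670,000 of these families.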

With the new GW monsters, some projections see this consumption doubling or tripling by 2030, to between 12% and 17% of total U.S. electricity.

This is our first problem: we don’t generate enough. If you’re wondering why that’s an issue, let me frame the situation differently.

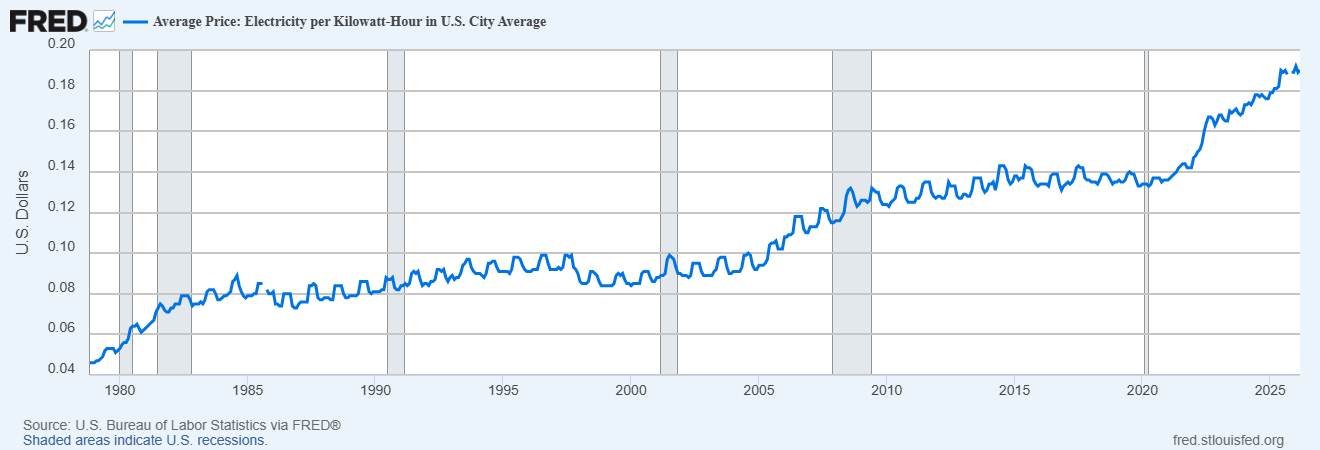

Electricity generation is limited. If AI data centers take it, someone else doesn’t get it. When there is a fight for a commodity, it usually goes to whoever pays the most, which raises the price for everyone else. And Mr Dupont, our average household, won’t be able to outbid Microsoft, so overall electricity prices will rise through the usual supply and demand mechanisms.
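The bidding-war argument can be illustrated with a deliberately simplified toy model: a fixed amount of electricity goes to the highest bidders, and everyone pays the price of the last (marginal) bid served, as in a uniform-price auction. Real power markets are far more complex; the buyers, quantities and prices below are all hypothetical.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Bid:
    buyer: str
    mwh: float        # quantity requested
    max_price: float  # willingness to pay, $/MWh

def clear_market(bids: list[Bid], supply_mwh: float) -> tuple[float, dict[str, float]]:
    """Serve the highest bids first until supply runs out.

    The clearing price is the max_price of the last bid that got any energy.
    """
    remaining = supply_mwh
    allocation: dict[str, float] = {}
    clearing_price = 0.0
    for bid in sorted(bids, key=lambda b: b.max_price, reverse=True):
        if remaining <= 0:
            break  # supply exhausted: lower bids get nothing
        served = min(bid.mwh, remaining)
        allocation[bid.buyer] = allocation.get(bid.buyer, 0.0) + served
        remaining -= served
        clearing_price = bid.max_price
    return clearing_price, allocation

base = [
    Bid("household_a", 40, 60.0),
    Bid("household_b", 40, 50.0),
    Bid("industry", 20, 40.0),
]
print(clear_market(base, supply_mwh=100))
print(clear_market(base + [Bid("data_center", 50, 120.0)], supply_mwh=100))
```

With the data center in the auction, the clearing price rises from 40 to 50 $/MWh, household_b is only partly served, and industry gets nothing, even though total supply never changed.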

AI isn’t the only reason electricity costs are rising, let’s not overgeneralize, but it is part of it, and its share will grow as the new monsters come online.

Now, we have to talk about the grid. I covered the topic and how it works here; give it a quick read to understand the concept, as it’s important. In a nutshell:

The grid is the name given to the electrical system of a city or a country, from producers to end users. It is an incredibly complex piece of engineering, constantly hanging by a thread, because production has to equal consumption at every moment. If the two drift apart, the grid’s frequency (60 Hz in the U.S.) moves away from its nominal value, and the infrastructure could be damaged or, at worst, overheat and burn.
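One textbook way to see why production must match consumption is the aggregate swing equation: a gap between generation and load initially changes grid frequency at a rate of roughly f0 · ΔP / (2 · H · S), where f0 is the nominal frequency, ΔP the imbalance, H the system inertia constant and S the system rating. The sketch below applies it with hypothetical system numbers and deliberately ignores generator governors and automatic load shedding, which in a real grid step in within seconds.

```python
NOMINAL_HZ = 60.0  # U.S. grid frequency

def rocof_hz_per_s(imbalance_mw: float, inertia_s: float, system_mva: float,
                   f0: float = NOMINAL_HZ) -> float:
    """Initial rate of change of frequency: df/dt = f0 * dP / (2 * H * S).

    A negative imbalance (load above generation) makes frequency fall.
    """
    return f0 * imbalance_mw / (2 * inertia_s * system_mva)

def frequency_after(seconds: float, imbalance_mw: float, inertia_s: float,
                    system_mva: float, f0: float = NOMINAL_HZ) -> float:
    """Linear extrapolation with no governor response or load damping."""
    return f0 + rocof_hz_per_s(imbalance_mw, inertia_s, system_mva, f0) * seconds

# Hypothetical system: 100,000 MVA, inertia constant H = 5 s,
# hit by 1,000 MW of new load with no matching generation.
print(rocof_hz_per_s(-1_000, 5.0, 100_000))                # about -0.06 Hz/s
print(round(frequency_after(5, -1_000, 5.0, 100_000), 2))  # 59.7
```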

As I mentioned earlier, data centers are online 24/7, but grid demand isn’t constant. It fluctuates by month and by hour, combining a base load (constant demand) with variable consumption (households, or temporary needs like heating, air conditioning or cooking).

This is our second problem, although it is so closely tied to the first that we could call it problem one and a half. Generation has to fluctuate during the day and ramp up at peak load, and grids are built to produce more than that peak. New giants plugged into the grid add massively to the base load, which pushes the peak up by the same amount; that can hit the production limit and create supply issues.
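A toy hourly profile makes the point: a flat load raises every hour by the same amount, including the peak, so a grid with comfortable headroom can end up short at its busiest hour. All numbers below (base load, hourly variable demand, capacity and data center size) are hypothetical.

```python
BASE_LOAD_GW = 20.0
# Hypothetical variable demand for hours 0-23 (GW): low at night, peaking in the evening.
VARIABLE_GW = [2, 1, 1, 1, 1, 2, 4, 6, 7, 6, 6, 6,
               7, 7, 7, 8, 9, 11, 12, 11, 9, 7, 5, 3]
CAPACITY_GW = 35.0  # hypothetical maximum generation available

def hourly_demand(base_gw: float, variable_gw: list[float],
                  flat_extra_gw: float = 0.0) -> list[float]:
    """Total demand per hour; a data center adds the same load to every hour."""
    return [base_gw + v + flat_extra_gw for v in variable_gw]

def short_hours(demand_gw: list[float], capacity_gw: float) -> list[int]:
    """Hours in which demand exceeds available generation."""
    return [hour for hour, d in enumerate(demand_gw) if d > capacity_gw]

today = hourly_demand(BASE_LOAD_GW, VARIABLE_GW)
with_dc = hourly_demand(BASE_LOAD_GW, VARIABLE_GW, flat_extra_gw=4.0)
print(max(today), short_hours(today, CAPACITY_GW))      # 32.0 []
print(max(with_dc), short_hours(with_dc, CAPACITY_GW))  # 36.0 [18]
```

Off-peak, the extra 4 GW fits easily; the shortfall only shows up at hour 18, the evening peak.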

The other half of this issue is that the U.S. grid hasn’t been upgraded in decades and relies on inefficient, outdated infrastructure for both generation and transmission. And as you know, we don’t like inefficiency in AI lately, where everything must be optimized.

Because most data centers are plugged into the common grid, they share its infrastructure and compete with Mr Dupont for the same electricity. This is a major problem, and data centers are trying to solve it for two main reasons.

They want access to reliable, cheap electricity that isn’t affected by external factors, so they can manage their operations as they see fit.

Political pressure is growing, because data centers consuming more than 10% of U.S. production and driving up household prices won’t be tolerated.

Our third problem comes from electrical engineering: AC vs. DC current. I won’t go into the details, but the overall concept is pretty easy to understand, and so is the problem it creates.

The difference between alternating and direct current is how they flow: AC reverses direction at a set frequency (60 cycles per second in the U.S.), while DC flows steadily in one direction, from A to B. What matters here is that DC is more stable and is what electronics run on: data centers need DC. AC was historically easier to transform (stepping voltage up and down) and to transport over long distances, so our infrastructure was built on it, and it is now too expensive to change. That leaves us with a legacy system; newer technologies like HVDC (high-voltage direct current) could replace it, but won’t, and that’s political.

Current can be converted between AC and DC with dedicated hardware (rectifiers and inverters), but there is a tax: lost energy, which specialists estimate at between 10% and 15%. Every time current is converted from one form to the other, energy is lost. For GW data centers, a 10% conversion loss is unacceptable in terms of added costs: we are easily talking tens of millions of dollars, not including the systems required to cool the heat this loss generates.
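Two pieces of arithmetic sit behind this paragraph: losses compound across conversion stages (overall efficiency is the product of each stage’s efficiency), and a loss fraction on a continuous GW-scale load adds up quickly over a year. The three stage efficiencies and the $50/MWh electricity price below are assumptions for illustration only, not figures from the text.

```python
from math import prod

HOURS_PER_YEAR = 8_760

def chain_efficiency(stage_efficiencies: list[float]) -> float:
    """Overall efficiency of conversions in series: the stage efficiencies multiply."""
    return prod(stage_efficiencies)

def annual_loss_cost_usd(power_mw: float, loss_fraction: float,
                         price_usd_per_mwh: float) -> float:
    """Yearly cost of energy lost to conversion for a load running 24/7."""
    lost_mwh = power_mw * HOURS_PER_YEAR * loss_fraction
    return lost_mwh * price_usd_per_mwh

# Hypothetical chain of three conversion stages at 96%, 96% and 97% efficiency
stages = [0.96, 0.96, 0.97]
print(f"overall loss: {1 - chain_efficiency(stages):.1%}")  # overall loss: 10.6%

# 1 GW (1,000 MW) facility, assumed price of $50/MWh
for loss in (0.10, 0.15):
    cost = annual_loss_cost_usd(1_000, loss, 50)
    print(f"{loss:.0%} loss: ${cost / 1e6:.1f}M per year")
```

Under these assumptions, the 10% to 15% range works out to roughly $44M to $66M a year for a single 1 GW site, before counting the cooling needed for the waste heat.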

In a nutshell, the problem is simple: we don’t generate enough energy today to meet AI’s needs, and the current infrastructure is old and inefficient and has to be optimized, just like compute. This can be fixed in three ways.

Generate more electricity.

Store energy outside peak hours to use it at peak load.

Build self-sufficient power systems for AI data centers.
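The storage option can be sketched as simple peak shaving: a battery charges from the grid whenever demand is below a target level and discharges when demand exceeds it, within its power and energy limits. This is a minimal sketch that ignores round-trip losses, and every number in it (hourly demand, target, battery size) is hypothetical.

```python
def shave_peaks(demand_gw: list[float], target_gw: float, max_power_gw: float,
                capacity_gwh: float, stored_gwh: float = 0.0) -> list[float]:
    """Return the demand the grid actually sees, hour by hour, with a battery.

    Below target: the battery charges, adding load. Above target: it discharges,
    removing load. Each step is one hour, so GW and GWh line up one-to-one.
    Round-trip losses are ignored.
    """
    grid = []
    for d in demand_gw:
        if d > target_gw:
            discharge = min(d - target_gw, max_power_gw, stored_gwh)
            stored_gwh -= discharge
            grid.append(float(d - discharge))
        else:
            charge = min(target_gw - d, max_power_gw, capacity_gwh - stored_gwh)
            stored_gwh += charge
            grid.append(float(d + charge))
    return grid

demand = [20, 20, 22, 30, 34, 26]  # hypothetical hourly demand, GW
print(shave_peaks(demand, target_gw=28, max_power_gw=4, capacity_gwh=8))
```

Here the peak the grid sees drops from 34 GW to 30 GW, paid for with extra charging load in the quiet hours; the battery’s 4 GW power limit is what keeps it from reaching the 28 GW target.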

But our biggest problem is a different one, and it explains the accelerating spending on new energy solutions today. It isn’t technical but temporal. Data centers need an efficient solution now, today, not in two years. They have to power expansions that are being built at a private-company pace rather than a bureaucratic one, and they cannot wait for grid upgrades.

That’s what we are going to talk about today: the narratives, the technicals, the opportunities, and how to capitalize on them right now.